A Practical Guide to AI Security: From Visibility to Data Protection

Many organisations are embracing AI, and it's easy to see why. Recent stats show that for every $1 spent on AI, organisations can expect a $3.50 return. On top of this, Microsoft recently reported that Copilot users are saving up to 30 minutes a day, or around 10 hours a month.

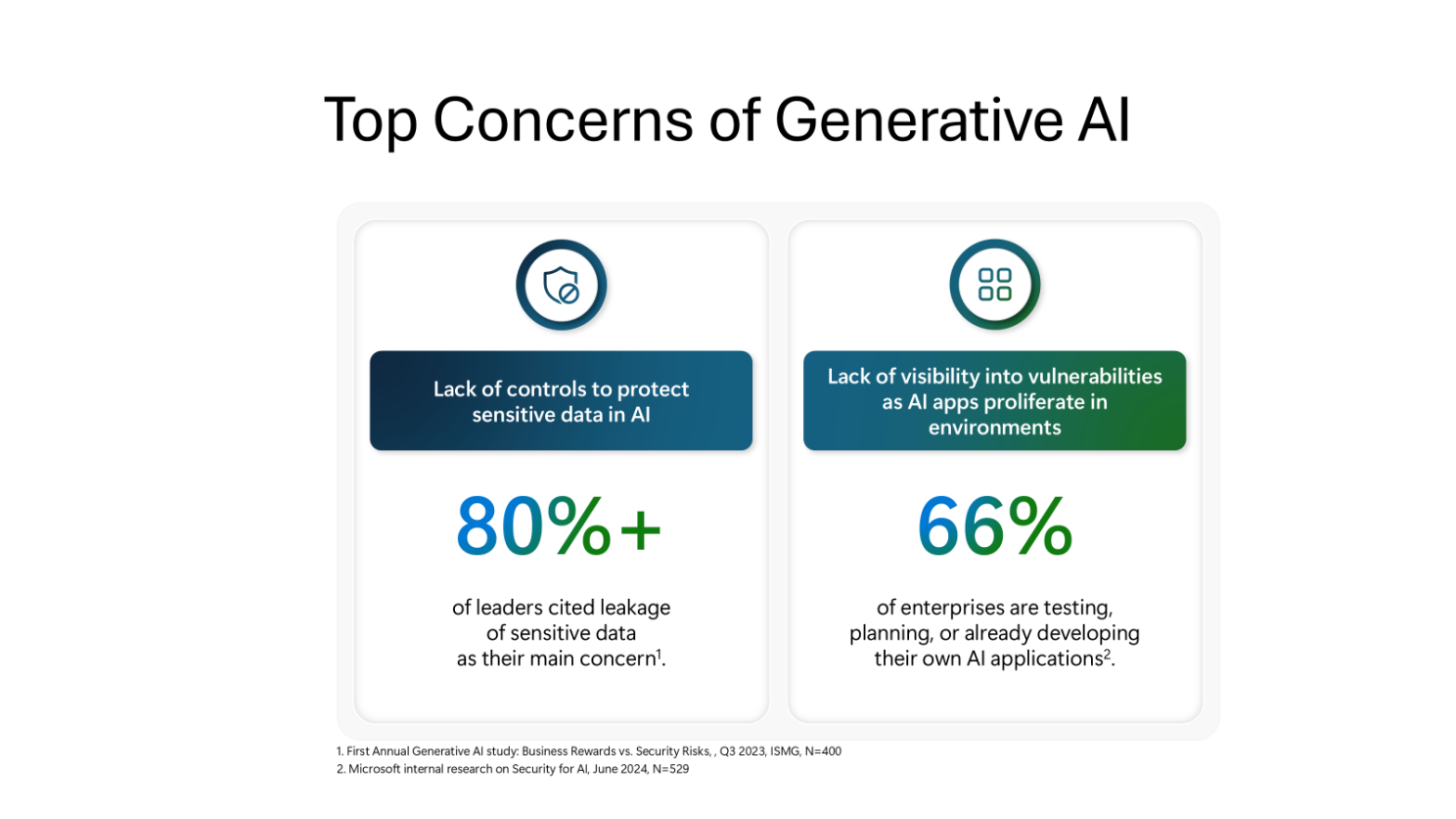

But despite the excitement and the clear business benefit, security worries often hold companies back.

When speaking with organisations, the concerns always come back to:

- Lack of control over how sensitive data is used in AI tools

- Not having visibility or control over AI apps being used in the organisation

We all know the risks, but figuring out how to assess and handle them in your business can be tricky. Many organisations struggle with this and don't know where to start. This guide is here to help.

What we'll cover:

- Understanding current AI usage & identifying high-risk scenarios

- Governing & managing the use of AI

- Keeping your data secure

Understanding AI Usage & Identifying High-Risk Scenarios

To make a plan, you need to know where you're starting. Many companies struggle to assess their current AI usage, which makes it hard to tackle AI security risks.

First, you need to understand your current AI usage and assess the risks. Then, identify high-risk scenarios and create a plan to maximize AI's value while staying secure. This can help define a strategy for your organisation, starting with the most important areas first.

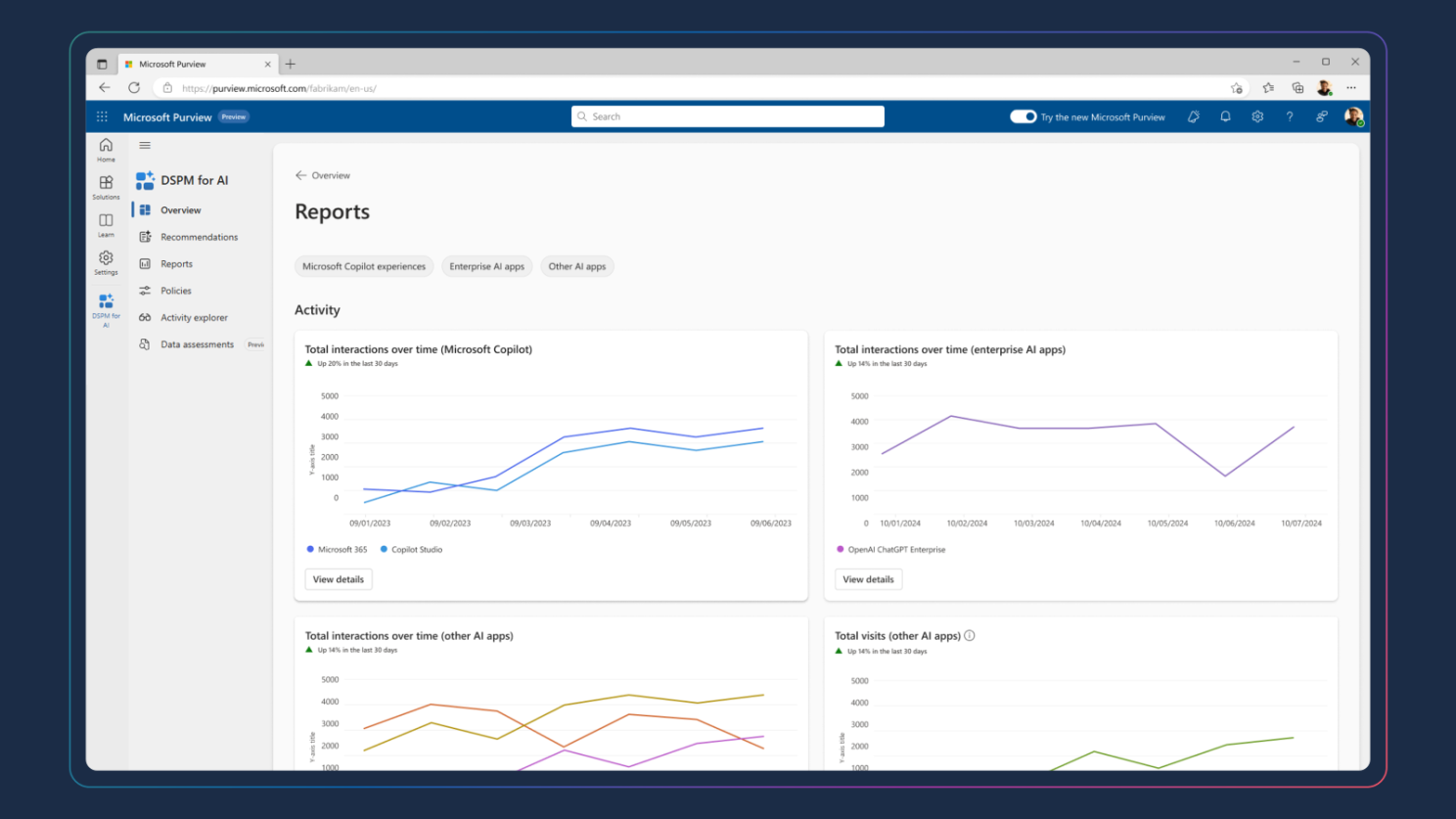

To help with this, in November 2024 Microsoft launched a number of innovations, including Data Security Posture Management (DSPM) for AI.

DSPM for AI monitors and assesses AI use in your organisation to identify vulnerabilities or risks. With this, businesses can gain visibility into where data is, how it flows, and who accesses it.

The insights provided give organisations a glimpse into AI usage in their organisation - whether that's sanctioned or unsanctioned AI applications - as well as the nature of data being leveraged within these tools.

This insight allows organisations to understand how their employees are leveraging AI today and to identify any potential risks, so that a tailored strategy can be devised to address them.

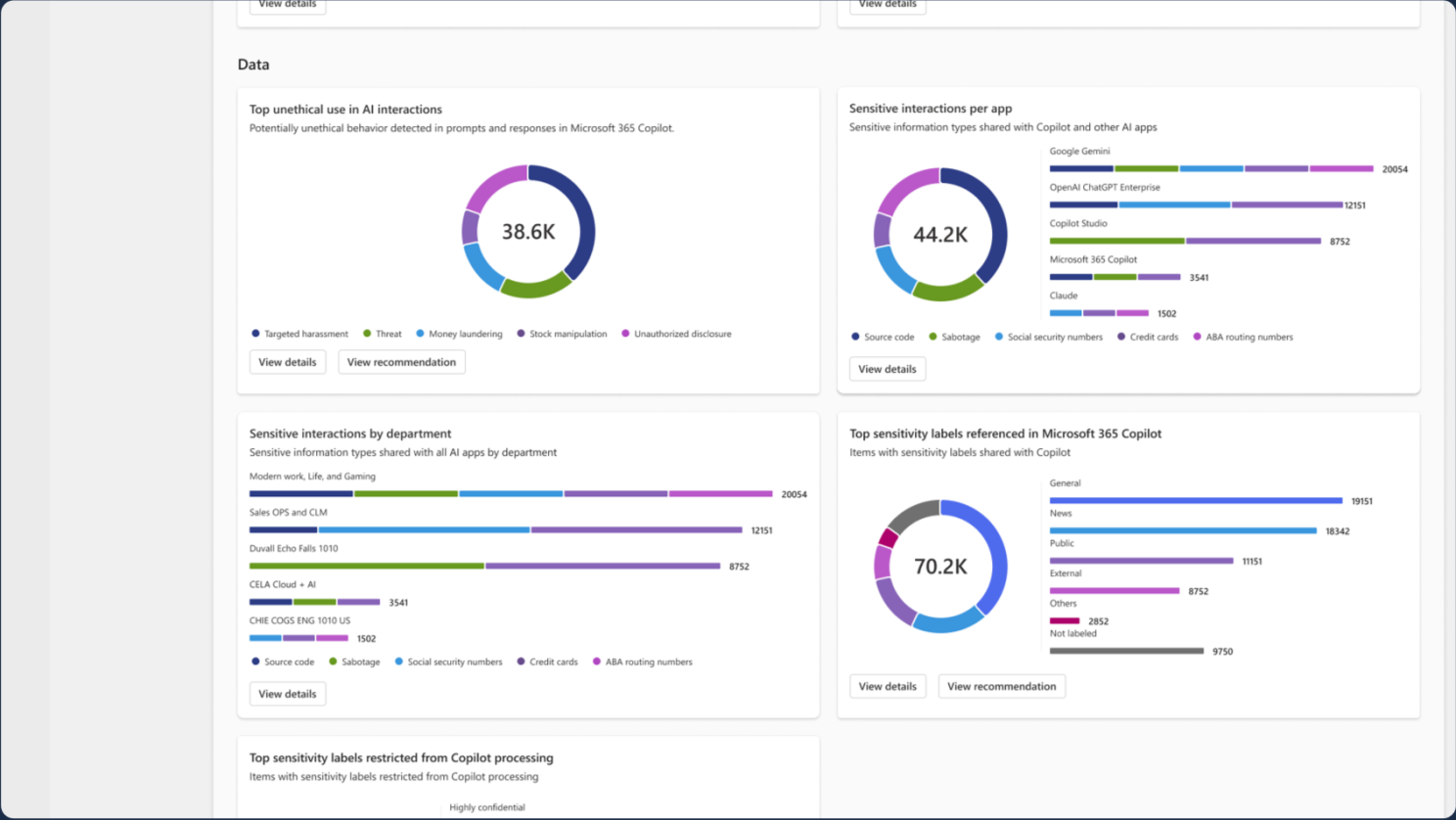

For example, in the image above we can see that a high volume of prompts containing credit card information is being sent to gen AI apps. If any of these tools are not sanctioned by IT, this may present a significant risk. Seeing this allows organisations to prioritise assessing the risk of these AI tools, perhaps locking them down if needed.
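
To make that kind of finding concrete, here is a minimal Python sketch of how prompts containing card-like numbers might be counted per unsanctioned app. It is not how DSPM for AI is implemented. The regular expression, the Luhn checksum filter, the sample prompt log, the app names, and the `SANCTIONED_APPS` set are all assumptions made up for illustration.

```python
import re
from collections import Counter

# Hypothetical allow-list of apps approved by IT (an assumption for this sketch).
SANCTIONED_APPS = {"Microsoft 365 Copilot"}

# 13 to 19 digits, optionally separated by single spaces or hyphens.
CARD_PATTERN = re.compile(r"\b\d(?:[ -]?\d){12,18}\b")


def luhn_valid(number: str) -> bool:
    """Return True if the digit string passes the Luhn checksum."""
    total = 0
    for index, char in enumerate(reversed(number)):
        digit = int(char)
        if index % 2 == 1:  # double every second digit, counting from the right
            digit *= 2
            if digit > 9:
                digit -= 9
        total += digit
    return total % 10 == 0


def contains_card_number(prompt: str) -> bool:
    """Return True if the prompt holds a digit run that passes the Luhn check."""
    for match in CARD_PATTERN.finditer(prompt):
        digits = re.sub(r"[ -]", "", match.group())
        if luhn_valid(digits):
            return True
    return False


def risky_prompt_counts(prompt_log):
    """Count prompts with card-like numbers sent to unsanctioned apps."""
    counts = Counter()
    for app, prompt in prompt_log:
        if app not in SANCTIONED_APPS and contains_card_number(prompt):
            counts[app] += 1
    return counts


# Made-up sample data; 4111 1111 1111 1111 is a widely used test card number.
sample_log = [
    ("ExampleChatApp", "Refund card 4111 1111 1111 1111 please"),
    ("ExampleChatApp", "Summarise this meeting for me"),
    ("Microsoft 365 Copilot", "Card 4111-1111-1111-1111 was declined"),
    ("AnotherAITool", "Order number 1234 5678 9012 3456"),
]

print(dict(risky_prompt_counts(sample_log)))
```

The checksum step is there to cut false positives: a 16-digit order number matches the pattern but is unlikely to pass the Luhn check, so it is not counted.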

Now that we know the challenge, we can work on a plan to address it.

Govern & Manage the Use of AI

Not all AI apps are created equal. While many enterprise AI apps are secure, consumer-grade tools often lack robust security & privacy controls. The main concern boils down to what these tools do with your data and whether these tools use your data to train their models.

In 2023, Samsung was in the news for all the wrong reasons when employees reportedly pasted sensitive internal data, including source code, into a consumer AI tool. The concern? Data submitted to a tool like this can be retained by the provider and potentially used to train its models.

To tackle this, many companies have an "Acceptable AI Use Policy" to set rules around AI usage and tool selection.

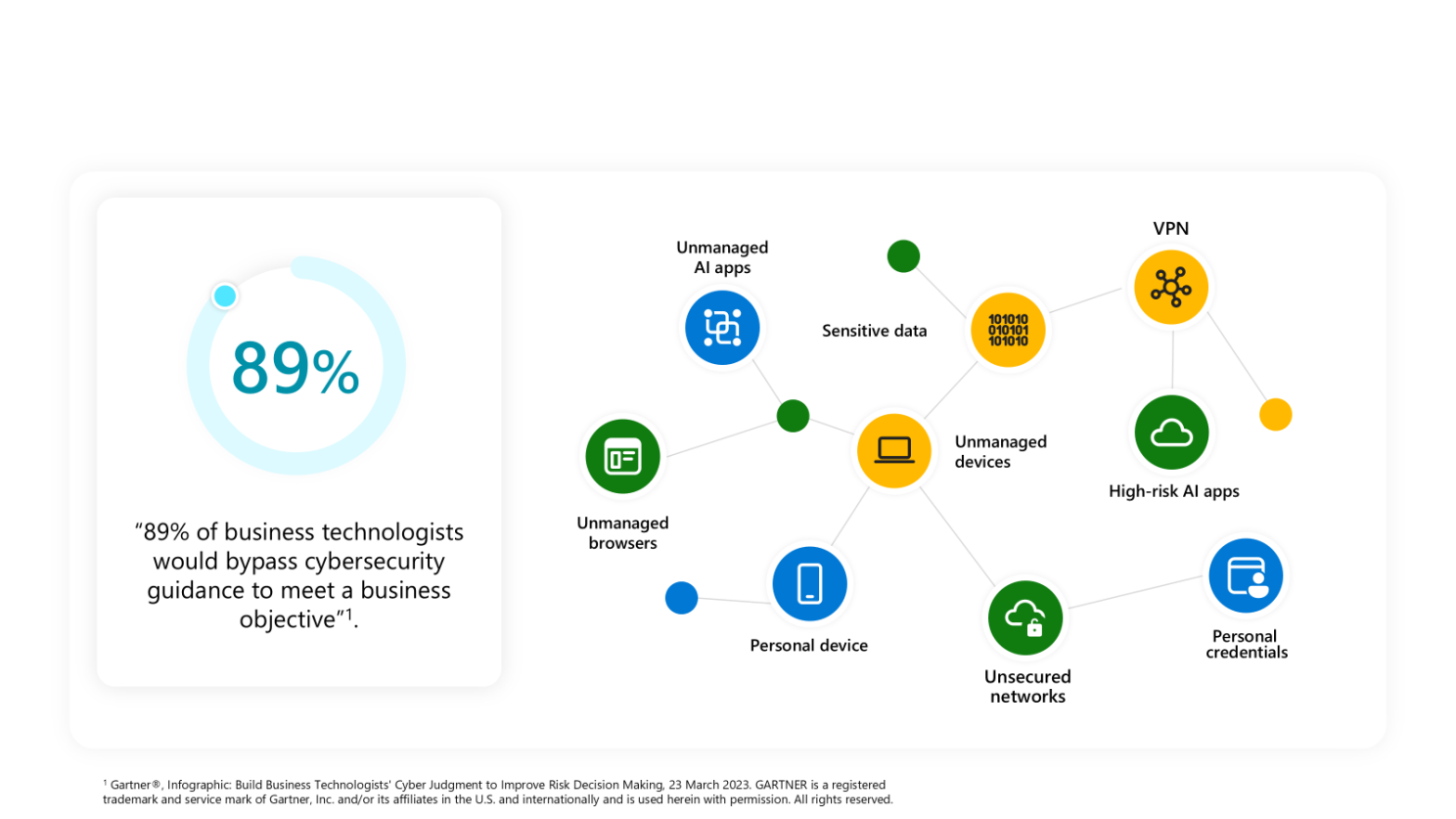

But given that humans naturally look for the simplest route to achieve a task, there are often challenges with adherence. In fact, according to Gartner, 89% of "business technologists" would bypass cybersecurity rules to complete a task or objective. So, having a policy without enforcement is unlikely to be the most effective strategy.

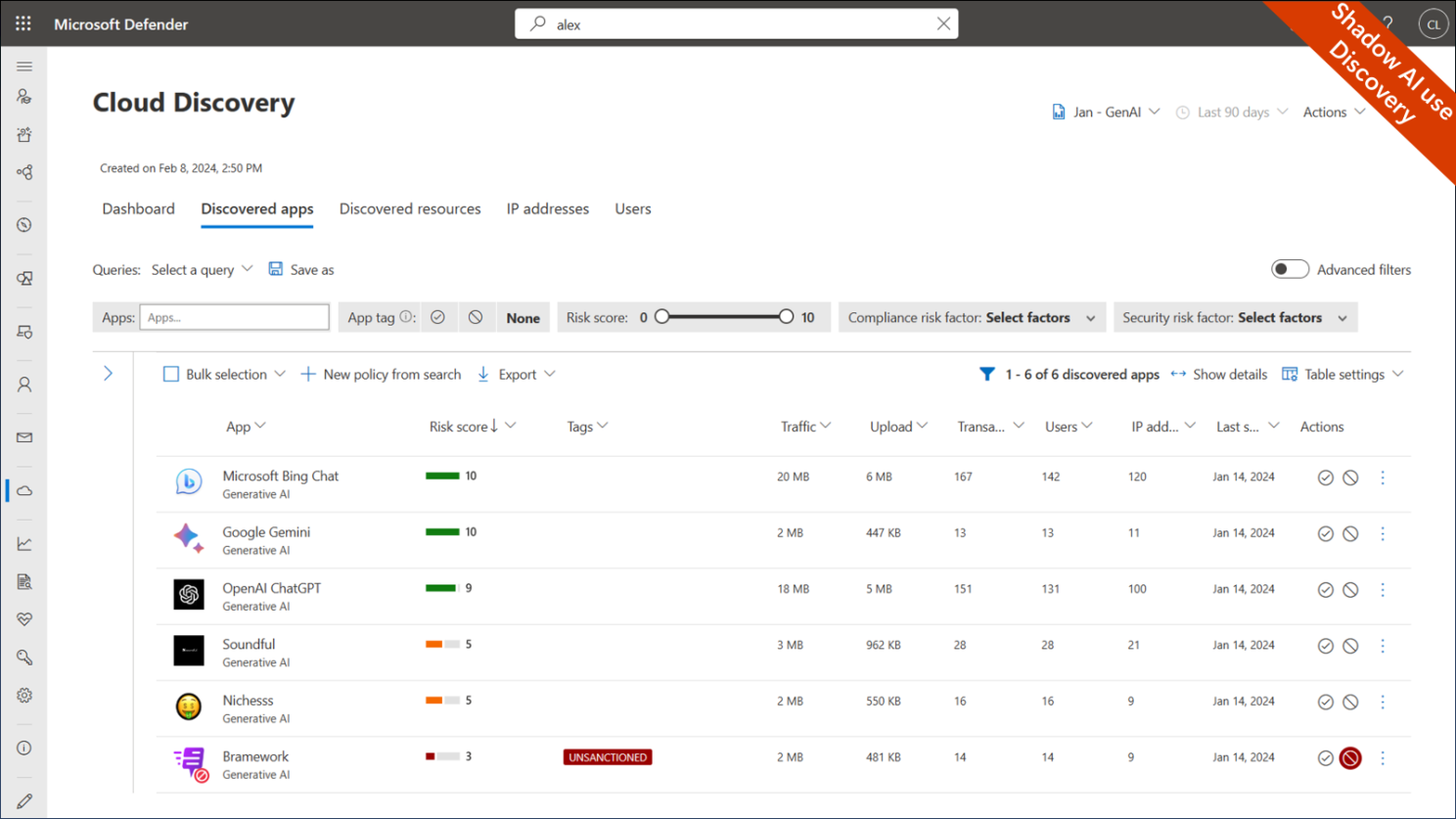

To address this, organisations can use a Cloud Access Security Broker (CASB), such as Defender for Cloud Apps, to govern the use of shadow AI. Like DSPM for AI, these tools can identify which AI apps are in use, and they also give each app a risk score, making it easy to spot high-risk usage.

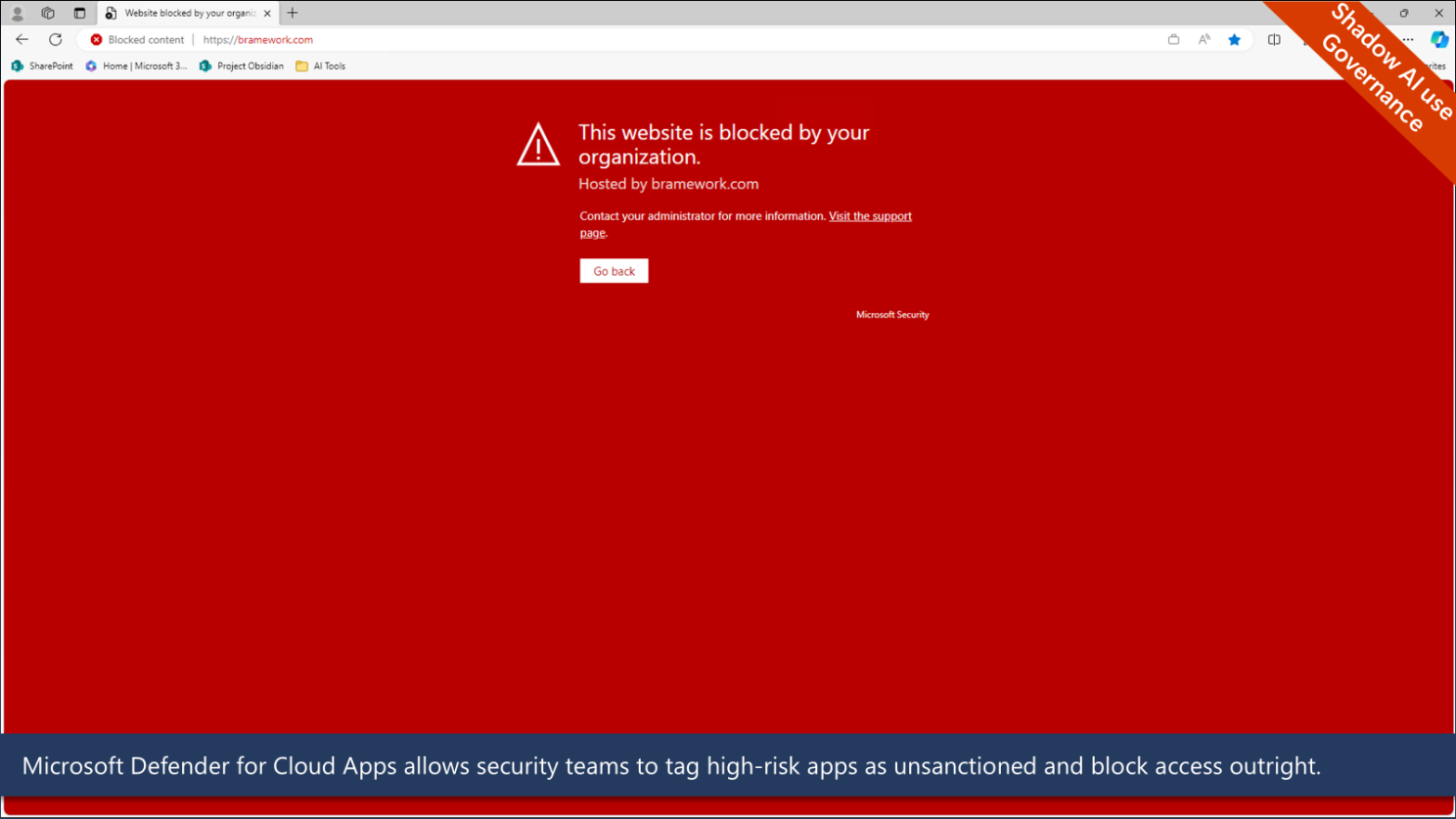

If an app is deemed risky or doesn't meet your organisation's security standards, you can tag it as "unsanctioned." This means the app will be blocked from being used.
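
As a rough illustration of that triage step, the sketch below splits discovered apps into "review for blocking" and "keep monitoring" based on a risk score. It does not call Defender for Cloud Apps. The `DiscoveredApp` type, the app names, the threshold of 6, and the assumption that the score runs from 0 (riskiest) to 10 (safest) are all hypothetical choices for this example.

```python
from dataclasses import dataclass


@dataclass
class DiscoveredApp:
    name: str
    risk_score: int  # assumed scale: 0 (riskiest) to 10 (safest)
    users: int       # number of people seen using the app


def triage(apps, min_acceptable_score=6):
    """Split apps into those to review for blocking and those to keep monitoring."""
    to_review = [app for app in apps if app.risk_score < min_acceptable_score]
    to_monitor = [app for app in apps if app.risk_score >= min_acceptable_score]
    # Look at the most widely used risky apps first.
    to_review.sort(key=lambda app: app.users, reverse=True)
    return to_review, to_monitor


# Made-up discovery results for illustration only.
discovered = [
    DiscoveredApp("ExampleChatApp", risk_score=3, users=40),
    DiscoveredApp("EnterpriseAssistant", risk_score=9, users=250),
    DiscoveredApp("AnotherAITool", risk_score=5, users=120),
]

to_review, to_monitor = triage(discovered)
print("Review for blocking:", [app.name for app in to_review])
print("Keep monitoring:", [app.name for app in to_monitor])
```

Sorting the review list by user count is a design choice: it puts the apps with the widest exposure at the top, which is usually where a decision matters most.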

Combining these governance & security controls with DSPM for AI allows organisations to quickly identify and remediate risks from AI applications in their business.

Keeping Your Data Secure

While managing the AI applications your users can access provides a level of security, it does rely on you staying one step ahead of the user. With so many AI apps out there, it can feel like playing whack-a-mole.

To build on this secure foundation, the final and most important step is to apply security controls to your data at source. This ensures sensitive data stays safe, no matter how users interact with it. Purview sensitivity labels can help with this.

Combining sensitivity labels and Data Loss Prevention (DLP) policies secures sensitive data and prevents it from being used in AI apps.

Purview sensitivity labels allow businesses to classify data according to its confidentiality level, whether that's public, internal, confidential, or highly confidential. With each label, organisations can apply security controls, including preventing the data from being shared in third-party AI applications, with external users, or in applications not managed by IT. This allows organisations to prevent their data from being exfiltrated, whether maliciously or accidentally.

Let's look at how this works in action.

In the clip below, a user is accessing a document that carries the sensitivity label "Confidential/Project Obsidian". The user then tries to copy information from this document and paste it into ChatGPT. However, as the document is confidential, the DLP policy kicks in and prevents the user from accidentally sharing sensitive information with an untrusted AI app.
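
The decision the DLP policy makes in that scenario can be sketched in a few lines of Python. This is a simplified model, not Purview's policy engine or configuration format. The `Sensitivity` levels mirror the four labels mentioned earlier, while `TRUSTED_AI_APPS`, `BLOCK_FROM`, and `evaluate_paste` are hypothetical names invented for this example.

```python
from enum import IntEnum


class Sensitivity(IntEnum):
    PUBLIC = 0
    INTERNAL = 1
    CONFIDENTIAL = 2
    HIGHLY_CONFIDENTIAL = 3


# Hypothetical set of AI apps the organisation trusts with labelled data.
TRUSTED_AI_APPS = {"Microsoft 365 Copilot"}

# Assumed policy: content at this label or above may not go to untrusted apps.
BLOCK_FROM = Sensitivity.CONFIDENTIAL


def evaluate_paste(label: Sensitivity, destination_app: str) -> str:
    """Return 'allow' or 'block' for pasting labelled content into an app."""
    if destination_app in TRUSTED_AI_APPS:
        return "allow"
    if label >= BLOCK_FROM:
        return "block"
    return "allow"


print(evaluate_paste(Sensitivity.CONFIDENTIAL, "ChatGPT"))
print(evaluate_paste(Sensitivity.INTERNAL, "ChatGPT"))
print(evaluate_paste(Sensitivity.HIGHLY_CONFIDENTIAL, "Microsoft 365 Copilot"))
```

Because the rule keys off the label on the data rather than a list of blocked apps, it still applies when a user reaches for an AI tool nobody has catalogued yet.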

Conclusion

AI offers incredible opportunities, but it's essential to tackle the security risks head-on. By understanding how AI is used in your organisation, assessing the risks, setting up governance policies, and securing your data, you can make the most of AI without worrying about security issues.

Tools like Microsoft's DSPM for AI and Defender for Cloud Apps can help you keep track of AI usage and manage risks effectively, with Purview sensitivity labelling providing that final layer for robust security & governance.

Curious about how to implement these strategies in your organisation? I'm more than happy to have a chat. Reach out to me, and we can discuss how to make AI work securely and effectively for you.